Each user is unique and so the optimal design for each user is different. But we cannot design a different product for each user. The adaptive games for learning project explored game features to tackle this challenge. I helped design and research adaptive and adaptable game features for an executive functions training game called All you can E.T.

Summary

Contributions: Research lead, Research design, Psychological design, Data collection, Data analysis, Scientific writing, Level Design, and Adaptive design

– I designed adaptive and adaptable features for a cognitive training game based on psychological research.

– We conducted research studies with these game features and published experimental and theoretical work in journals and book chapters.

– I led a large-scale research study comparing the adaptive and adaptable versions of the game with a non-adaptive version and an active control group.

Related Publications:

– Dissertation: Improving game-based executive functions training with adaptivity and adaptability. Request Manuscript

– Cognitive Development: The Effect of Adaptive Difficulty Adjustment on the Effectiveness of a Game to Develop Executive Function Skills for Learners of Different Ages. Access article

– Journal of Research on Technology in Education: Adaptive learning technologies. [Manuscript accepted]

– Handbook of Game-based learning: Adaptivity in learning games. Access chapter

– Jean Piaget Society 2018: The effect of adaptive difficulty adjustment on the effectiveness of a digital game to develop executive functions. [Conference paper]

Background & Goal

The goal of this project was to improve the outcomes of an executive functions training game. Executive functions (EF) are core cognitive processes that regulate attention and memory. EF are important because they influence academic outcomes and social well-being. Previous work suggested that focused gameplay can improve EF if players are challenged appropriately. This means challenging players continuously, based on both their initial skill level and their improvement during gameplay. In this context, the project goals were:

– Design and evaluate two features for adjusting game difficulty: adaptive and adaptable

– Observe the efficiency of these features in adjusting game difficulty to consistently challenge players and maintain motivation

– Test if consistently challenging players improves game-based EF training outcomes.

Design

All you can E.T. (AYCET) is an executive function training game developed by the Consortium for Research and Evaluation of Advanced Technologies in Education (CREATE). AYCET trains players’ shifting skills. Shifting is a sub-skill of EF related to switching between mental sets. It is the ability to adopt new information while disregarding old conflicting information. In this game, players feed aliens of different colors (blue vs orange) and features (one eye vs two eyes) with the appropriate food items (milkshakes vs cupcakes). Players must do this quickly, before the aliens disappear through the bottom of the screen. The correct food item depends on the current feeding rule. For example: feed cupcakes to blue aliens and milkshakes to orange aliens. These rules change frequently, for example to: feed milkshakes to blue aliens and cupcakes to orange aliens. To follow the rules, players have to switch between distinct mental sets before acting. This trains their shifting skills.
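The feeding rule described above can be illustrated with a small sketch. This is not the game's actual code: the `Alien` class, the rule dictionaries, and the `dimension` parameter are hypothetical names chosen for illustration, and the idea that a rule could also key on eye count is an assumption based on the alien features mentioned above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alien:
    color: str  # "blue" or "orange"
    eyes: int   # 1 or 2


# A rule maps an alien attribute value to the food it should receive.
# Both rules below mirror the examples given in the text.
COLOR_RULE = {"blue": "cupcake", "orange": "milkshake"}
SWITCHED_COLOR_RULE = {"blue": "milkshake", "orange": "cupcake"}


def correct_food(alien, rule, dimension="color"):
    """Return the food the current rule requires for this alien.

    `dimension` selects which attribute the rule keys on. Rules keyed on
    eye count are an assumption, not something the text confirms.
    """
    key = alien.color if dimension == "color" else alien.eyes
    return rule[key]


def is_correct(alien, food, rule, dimension="color"):
    """Check whether the player fed the alien the right food."""
    return correct_food(alien, rule, dimension) == food
```

When the active rule switches from `COLOR_RULE` to `SWITCHED_COLOR_RULE`, the same alien requires the opposite food, which is the mental-set switch the game is built around.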

Adaptive and adaptable versions

The adaptive version customizes game difficulty with the help of an algorithm that takes player performance metrics as input. The system makes adjustments at two levels: large adjustments at a slow pace (macro) and small, quick adjustments (micro). The macro adjustments are based on players’ accuracy and change the game rules between levels. This keeps players engaged with achievable but challenging game levels. The micro adjustments are based on players’ reaction time and change the game speed during each level. This way, players need to feed aliens quickly and are challenged throughout gameplay.

The adaptable version takes a different approach to adjusting difficulty: players are given control of their gameplay. Macro-level changes are made as in the adaptive version, but by the players instead of an algorithm. At the end of each level, players are asked whether to increase, decrease, or maintain the difficulty level.
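The two approaches can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the algorithm used in AYCET: the thresholds, step sizes, the integer `level` standing in for rule complexity, and the direction of the speed change (faster responses lead to a faster game) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Difficulty:
    level: int = 1      # macro: stands in for rule complexity, changed between levels
    speed: float = 1.0  # micro: game speed multiplier, changed within a level


def macro_adjust(d, accuracy, up=0.85, down=0.60, max_level=10):
    """Adaptive macro step, run between levels (thresholds are assumed)."""
    if accuracy >= up:
        d.level = min(d.level + 1, max_level)
    elif accuracy < down:
        d.level = max(d.level - 1, 1)
    return d


def micro_adjust(d, reaction_time_s, target_s=1.0, step=0.05,
                 min_speed=0.5, max_speed=2.0):
    """Adaptive micro step, run during a level (target and step are assumed)."""
    if reaction_time_s < target_s:
        d.speed = min(d.speed + step, max_speed)
    elif reaction_time_s > target_s:
        d.speed = max(d.speed - step, min_speed)
    return d


def adaptable_adjust(d, choice, max_level=10):
    """Adaptable macro step: the player chooses at the end of each level."""
    delta = {"increase": 1, "maintain": 0, "decrease": -1}[choice]
    d.level = min(max(d.level + delta, 1), max_level)
    return d
```

The only difference between `macro_adjust` and `adaptable_adjust` is who makes the decision: a performance threshold in the first case, the player's own choice in the second.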

Research

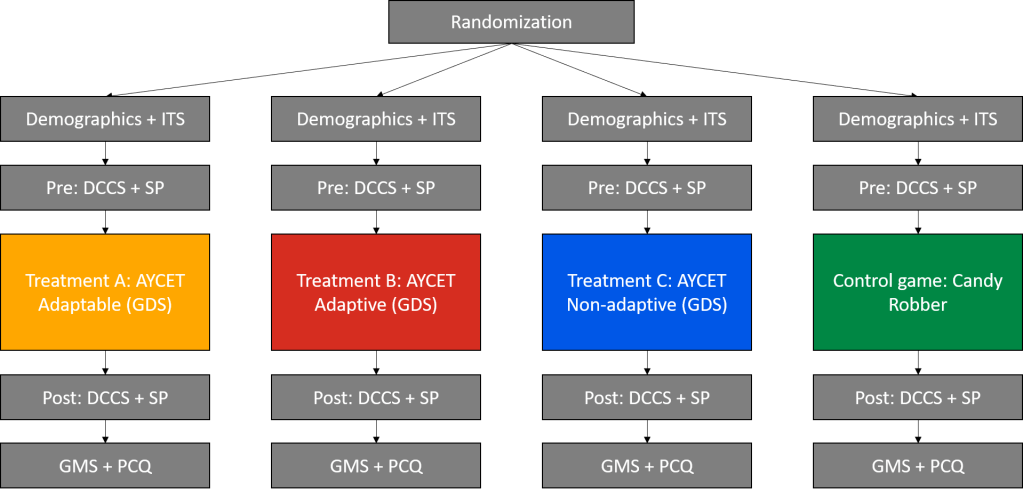

I conducted an experiment to test if adaptive features improve game-based EF training. The study was conducted with 317 high school students and was part of my dissertation work. The figure above shows the research procedure and the four treatment conditions. The study was designed to test the direct influence of adaptive and adaptable features on training outcomes. With this goal, a non-adaptive version (as a baseline measure) and an active control group (to tease apart practice effects) were included in the experiment. Random assignment helped avoid self-selection bias and ensured that all types of participants were (approximately) equally distributed between the treatment conditions. I included a Demographics survey, an Intelligence Theory Survey (ITS), a Game Motivation Survey (GMS), and a Perceived Control Questionnaire to analyze important player characteristics. The Dimensional Change Card Sort task (DCCS) and the Stroop task (SP) were used to measure EF improvements outside of the game. I also included a Game Difficulty Survey (GDS) to get a direct report of game difficulty from the players.
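One common way to implement random assignment to four conditions is to shuffle the participant list and deal participants out in turn. The sketch below assumes that approach; the text does not describe the procedure actually used in the study, and the condition labels and participant IDs are placeholders.

```python
import random
from collections import Counter

# Labels for the four treatment conditions (names are placeholders).
CONDITIONS = ["adaptive", "adaptable", "non_adaptive", "active_control"]


def assign_conditions(participant_ids, conditions=CONDITIONS, seed=None):
    """Shuffle participants, then deal them round-robin into conditions.

    This keeps group sizes within one participant of each other.
    """
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)
    return {pid: conditions[i % len(conditions)] for i, pid in enumerate(ids)}


# 317 placeholder IDs, matching the sample size reported above.
assignment = assign_conditions(range(1, 318), seed=42)
group_sizes = Counter(assignment.values())
```

With 317 participants and four conditions, three groups end up with 79 participants and one with 80.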

Data analysis

Data were analyzed to gain insights into: a) player background, b) differences in gameplay, and c) differences in task outcomes. The results were inconsistent with previous work and prompted further investigation of the gameplay data, leading to part d) exploratory analysis. I conducted analysis of covariance (ANCOVA), multiple regression, and paired t-tests to test the experimental hypotheses. I used data visualization techniques including density plots, stacked bar plots, temporal line graphs, and side-by-side boxplots to illustrate key findings. Details of the experimental findings can be found in the publications reporting these results. Parts of the visual analyses related to this work are shared below.

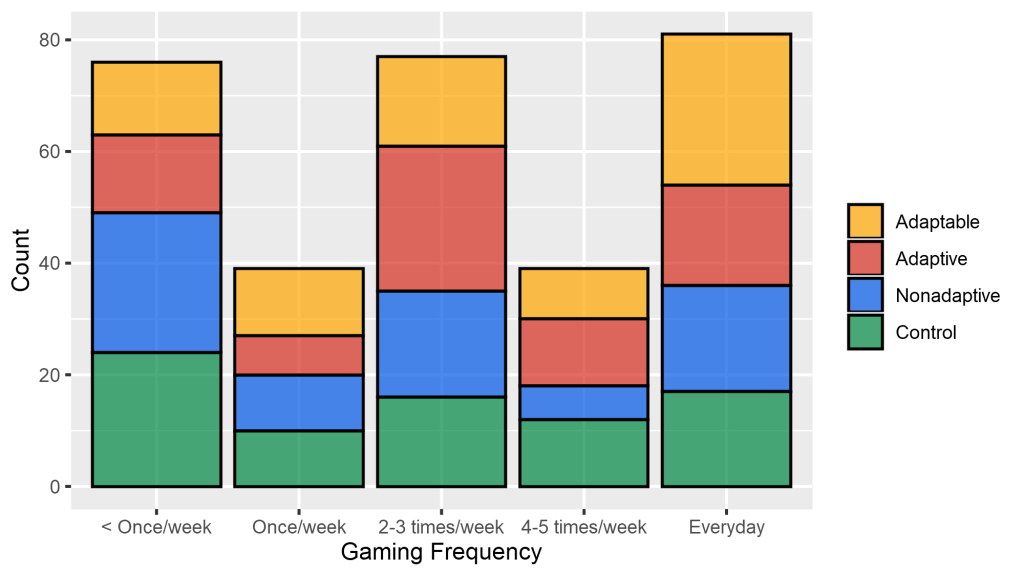

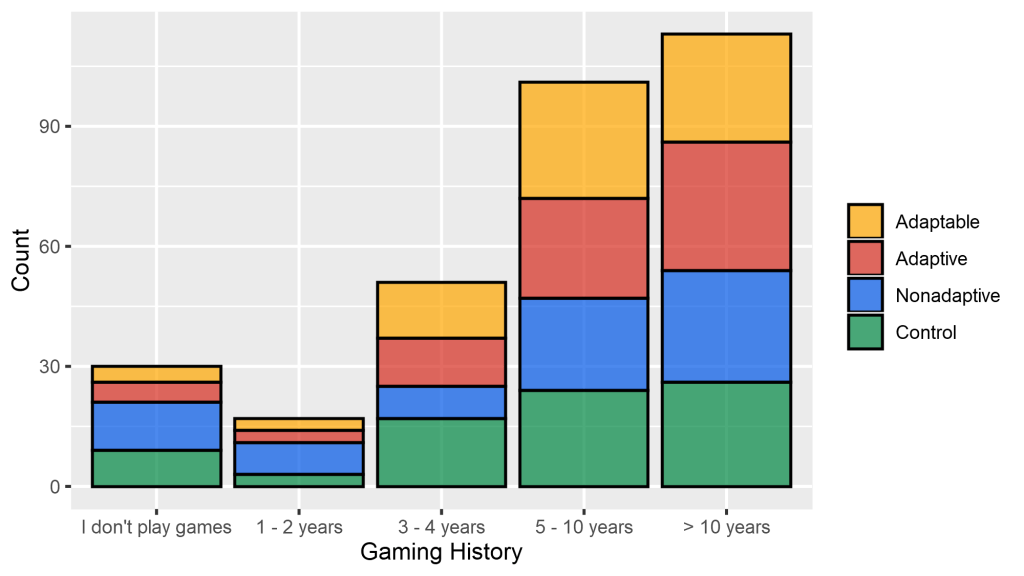

a) Player background

Player background can help explain differences in gameplay and in outcomes. For example, frequent gamers may be familiar with some game mechanics and engage with the game more readily. The visuals shared above highlight two things. First, ignoring the colors and looking only at the bars, they show the overall distribution of gaming experience across all participants. Second, taking the colors into consideration, they show that players with different gaming frequencies and histories were distributed in approximately equal proportions across all treatment groups.

b) Gameplay analysis

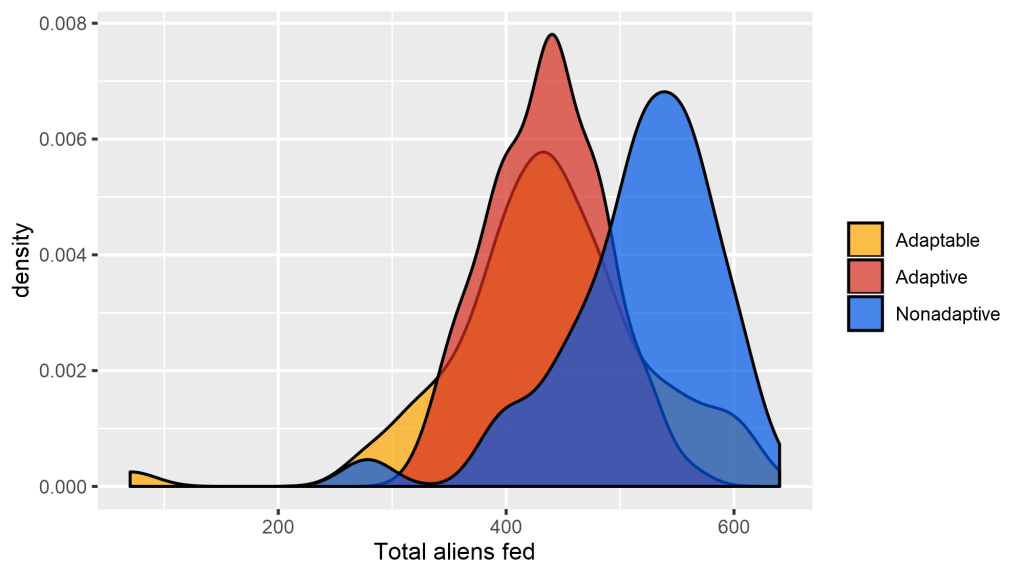

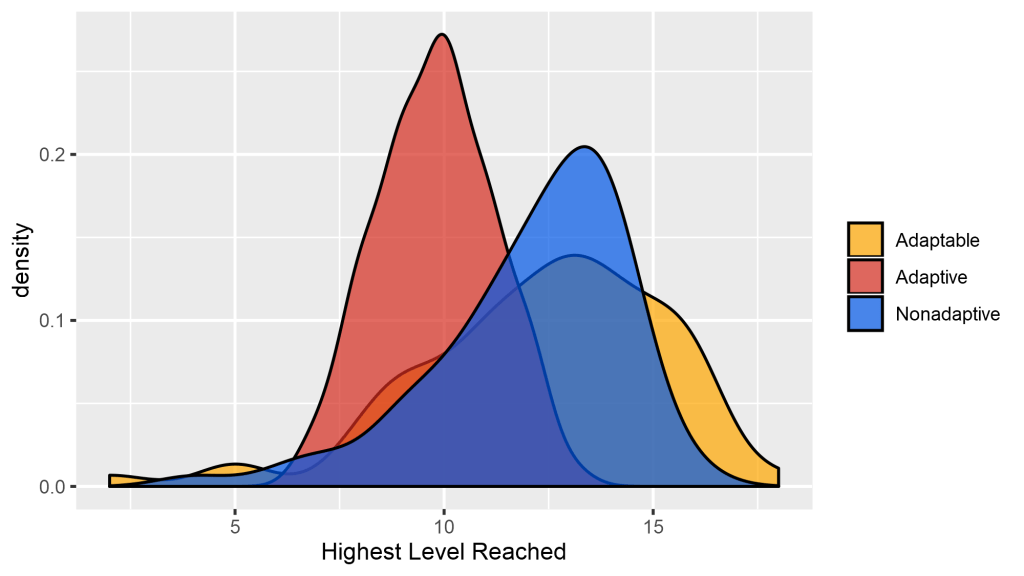

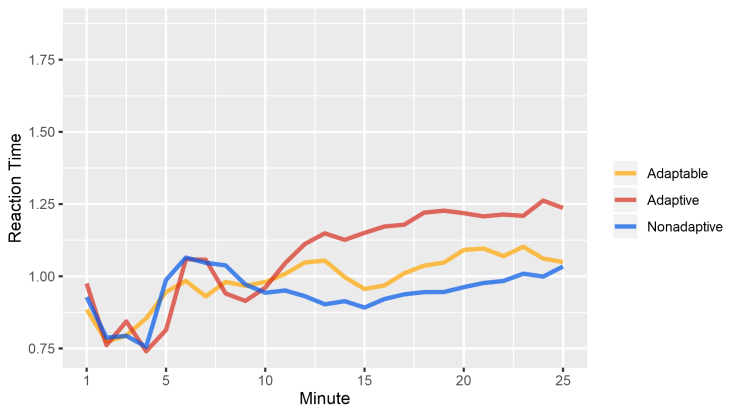

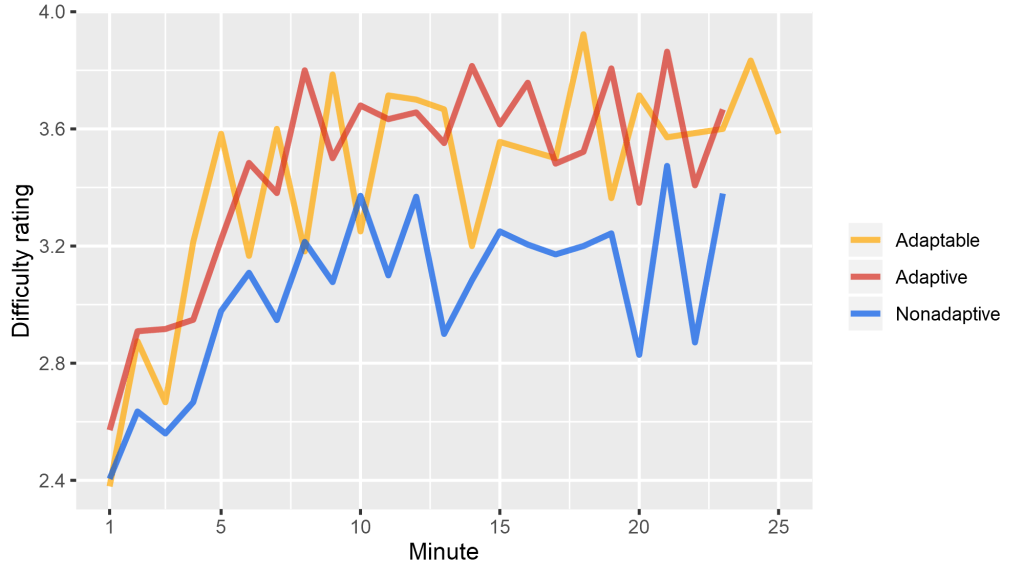

One of the research goals was to observe the influence of adaptivity and adaptability on gameplay. Gamelog data were used to calculate game variables including total aliens fed, highest level reached, accuracy, reaction time, game speed, game complexity, and difficulty ratings. Two methods of analysis were used: composite and temporal. The composite analysis revealed overall differences in gameplay between treatment groups. For example, the density plots shared above show overall differences in total aliens fed and highest level reached by players. The temporal analysis showed variations in gameplay over the 25-minute session. These analyses helped show how players in different groups performed over time. The line graphs shared below are an example of the temporal analysis conducted for this project. The figures show differences in reaction time and difficulty ratings between groups over time.
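A temporal analysis of this kind usually starts by binning trial-level log entries into time windows and averaging within each group. The sketch below assumes a simple log format of `(group, seconds_since_start, reaction_time_s)` tuples and a five-minute window; neither is taken from the actual gamelog format, and the sample trials are made up for illustration.

```python
from collections import defaultdict
from statistics import mean


def mean_by_chunk(trials, chunk_s=300, session_s=1500):
    """Average reaction time per (group, chunk index).

    trials: iterable of (group, seconds_since_start, reaction_time_s).
    Entries outside the 25-minute session (1500 s) are ignored.
    """
    buckets = defaultdict(list)
    for group, t, rt in trials:
        if 0 <= t < session_s:
            buckets[(group, int(t // chunk_s))].append(rt)
    return {key: mean(vals) for key, vals in sorted(buckets.items())}


# Made-up trials, for illustration only (not study data).
trials = [
    ("adaptive", 10, 0.90),
    ("adaptive", 200, 1.10),
    ("adaptive", 320, 1.20),
    ("non_adaptive", 50, 1.00),
    ("non_adaptive", 1600, 9.99),  # after the session ended, ignored
]
summary = mean_by_chunk(trials)
```

Each key in `summary` identifies one point on a temporal line graph: the group gives the line, the chunk index gives the position on the time axis.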

c) Task analysis

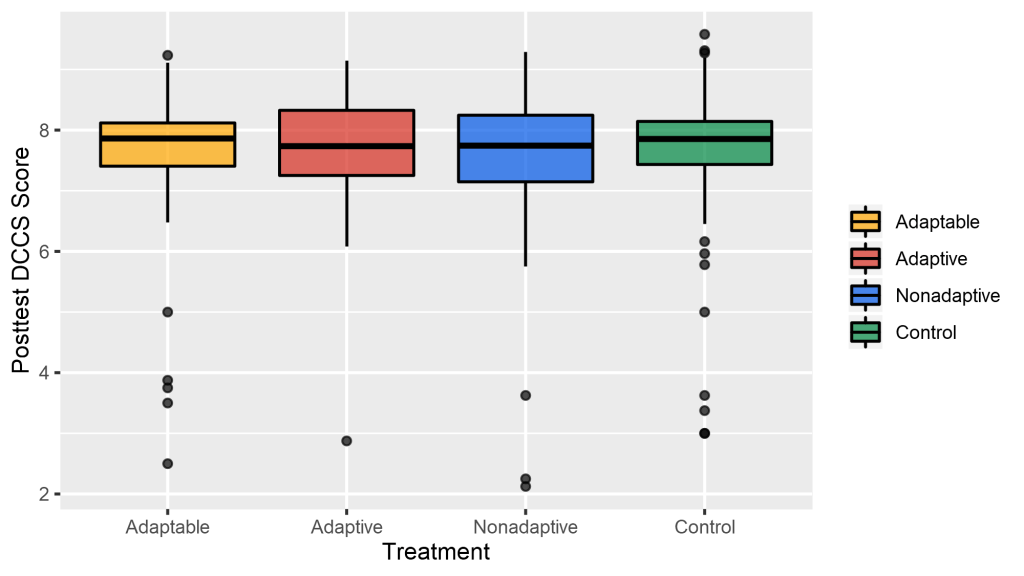

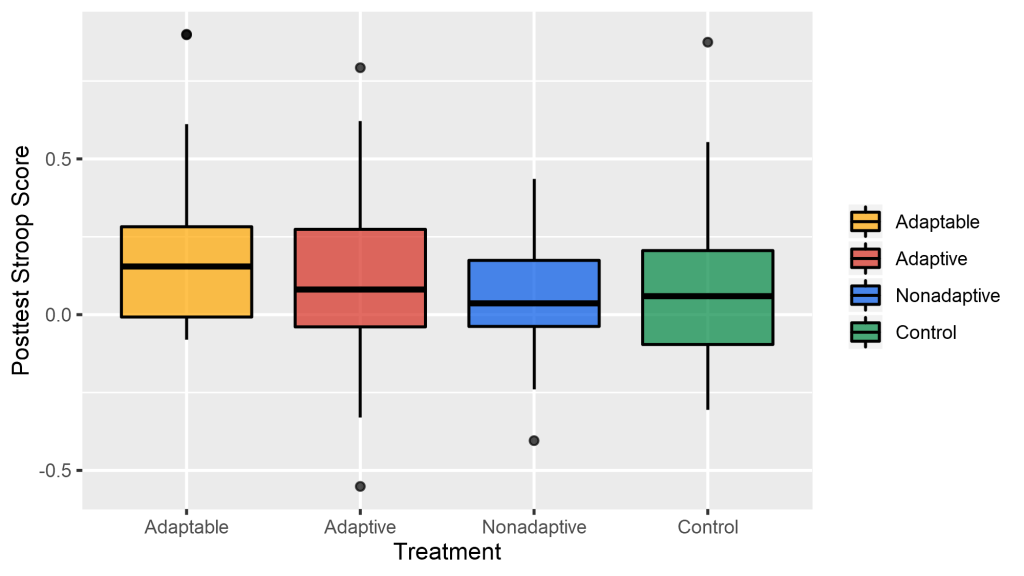

The primary goal of this project was to observe if adaptivity and adaptability improve EF training outcomes. To answer this question, I analyzed data from the DCCS and Stroop tasks, using analysis of covariance to compare differences between treatment groups. Details of these analyses can be accessed in the shared publications. A related visualization of the post-test task scores is provided in the figures below.

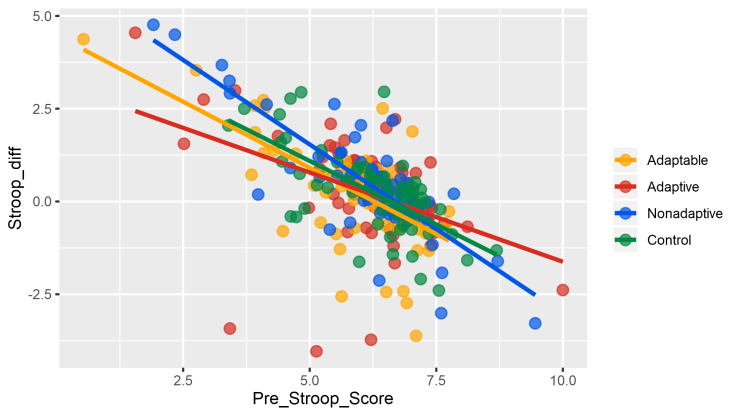

d) Exploratory analysis

Exploratory analyses were conducted because results from the task data were inconsistent with previous work. According to the National Institutes of Health (NIH), an increase in DCCS scores is expected when users simply retake the test. Instead, a decrease in score was found for a large portion of players in this study. Follow-up analysis showed that players’ performance gradually decreased over five-minute chunks of gameplay. This suggests that players became increasingly fatigued by the demanding gameplay, which led to a depletion of their cognitive resources. The figures below visualize this depletion.
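The share of players whose score dropped from pre-test to post-test can be computed as in the sketch below. The function name and the score pairs are hypothetical, and the numbers are made up for illustration rather than taken from the study.

```python
def share_decreased(pre_post):
    """Fraction of players whose post-test score is below their pre-test score.

    pre_post: list of (pre_score, post_score) pairs, one per player.
    """
    if not pre_post:
        raise ValueError("need at least one player")
    decreased = sum(1 for pre, post in pre_post if post < pre)
    return decreased / len(pre_post)


# Made-up scores, for illustration only (not study data).
example_scores = [(7.0, 6.5), (6.0, 6.4), (8.1, 7.9), (5.5, 5.5)]
example_share = share_decreased(example_scores)
```

Unchanged scores are not counted as decreases, so in this toy example two of the four players count as having decreased.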

Results

Results from this study were as follows:

1. The adaptive and adaptable versions were able to constantly challenge players while maintaining their motivation. Players’ difficulty ratings and the gamelog data both showed that players in the adaptive and adaptable groups engaged in more difficult gameplay without a reduction in motivation. This finding highlighted the benefits of using these features in games for learning.

2. The improvement in gameplay did not improve the task scores. In fact, the task scores decreased for a large number of participants. This finding was inconsistent with previous AYCET studies and with NIH data.

3. Exploratory analysis revealed that players experienced a depletion of cognitive resources over 25 minutes of gameplay. This finding informed further research regarding the importance of duration and schedule of gameplay.